Hexicurity's Goal: Using AI and Vision to Improve Wire-Crimp Quality

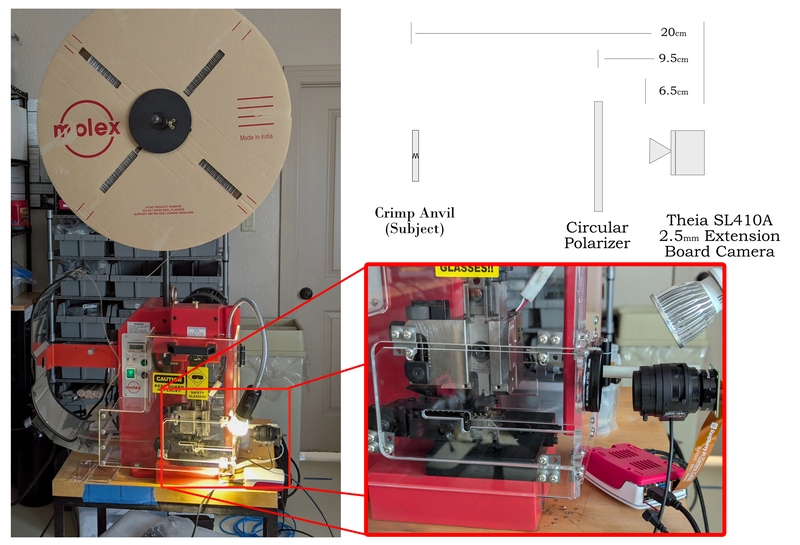

Figure 1: An imaging solution allows employees to have an unobstructed view of the wire positioned on an automated crimping machine.

Hexicurity is developing an AI-enabled vision system to improve wire crimp quality in its security access products, reducing faults and increasing reliability through machine learning and advanced imaging components.

Written by Linda Wilson, Editor-in-Chief Vision Systems Design | 1/12/26, 5:45 PM | Originally published on Vision Systems Design | Used with permission

What You Will Learn

- Hexicurity is integrating machine learning with vision systems to automate wire crimp manufacturing and quality assessment in its security hardware products.

- The imaging setup includes Raspberry Pi cameras, STM32 microcontrollers, and specialized lenses to provide clear, glare-free images of wire crimps.

- The system is designed to operate outside the safety plexiglass, and it provides unobstructed views and improved process flow for operators.

- Moving inference to edge devices like the STM32N6 microcontroller aims to enhance security, reduce cloud dependency, and lower operational costs.

Hexicurity (Boerne, Texas, USA), a manufacturer of security access integration solutions for commercial doors, turnstiles, and elevator systems, is developing an AI-enabled vision system to improve the quality of wire crimps in one of its products, called TransVerify.

TransVerify is a networking solution that integrates the badging security systems of individual tenants in a multi-tenant building or campus. This allows employees of the various tenants to access both the building and the office suite with a single badge. “We offer a measure of privacy to the corporations. They don’t have to release their entire employee list to the base building,” explains George Mallard, vice president of engineering for Hexicurity.

The Hexicurity system contains hardware and software in one self-contained unit. Describing it as an IoT-type “appliance,” Mallard says, “It is set up, and it runs for years, so reliability of the equipment is paramount.”

Hexicurity manufactures wire crimps for the verifier and distributor components that are then integrated into the TransVerify system.

Manual Wire-Crimping Process

Each TransVerify system contains about 100 wire crimps, which Hexicurity produces using a precision crimping machine from Molex (Lisle, IL, USA). Operators manually position a wire on the machine for each crimp, a process they repeat several hundred times per day, and then trigger the crimp process with a treadle.

However, the design of the machine partially blocks the operators’ view of the crimp point, making it difficult for them to position the wire precisely.

While the company has had only two instances of faulty wire crimps in its history, Hexicurity’s managers are developing a semi-automated process in which a machine learning-based vision system makes the go/no-go decision on whether to crimp a wire. Operators will still position the wire manually and press a treadle to initiate the crimping action.
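The gating logic described here can be sketched in a few lines. The following Python is an illustrative sketch only, not Hexicurity's implementation: the `CrimpGate` class, the `allow_crimp` method, and the 0.90 threshold are hypothetical names and values, and the sketch assumes the vision model reports a confidence score between 0 and 1 for wire position.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CrimpGate:
    """Hypothetical go/no-go gate combining the treadle and a model score."""

    threshold: float = 0.90  # assumed value; the article gives no threshold

    def allow_crimp(self, treadle_pressed: bool, position_confidence: float) -> bool:
        """Return True only if the operator asked for a crimp AND the
        model is confident enough that the wire is positioned correctly."""
        if not 0.0 <= position_confidence <= 1.0:
            raise ValueError("position_confidence must be between 0 and 1")
        return treadle_pressed and position_confidence >= self.threshold


if __name__ == "__main__":
    gate = CrimpGate()
    print(gate.allow_crimp(treadle_pressed=True, position_confidence=0.97))
    print(gate.allow_crimp(treadle_pressed=True, position_confidence=0.42))
```

In this arrangement the operator's treadle press remains a required input, so the model can only veto a crimp and never start one on its own.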

Adopting Industrial Automation Using Machine Vision

Mallard says the company built a machine learning (ML) model to evaluate the position of the wire on the Molex crimping machine. After tweaking the model, Hexicurity will test it.

In a later phase of crimping automation, Hexicurity plans to develop a second algorithm to evaluate the quality of the crimp after it is completed, leading to an accept/reject decision.

Even without the machine learning capabilities, the Hexicurity team installed the components as an interim imaging solution, allowing employees to have an unobstructed view of how the wire is positioned. “Right now, it’s just an imaging system. It throws an image up on the screen so that the operator can see it,” Mallard says, adding that the use of an imager has already improved crimping process flow and production cycle time.

Machine Vision Imaging System

The Hexicurity team designed the system using numerous components including:

- Raspberry Pi 4K camera from Raspberry Pi Foundation (Cambridge, United Kingdom)

- STM32N6 ARM microcontroller from STMicroelectronics (Geneva, Switzerland), which integrates an ARM processor with a proprietary Neural-ART accelerator

- SL410A 4-10 mm varifocal lens from Theia Technologies (Wilsonville, OR, USA)

- 52 mm circular polarizer lens filter from Amazon (Seattle, WA, USA)

- PAR16 LED bulb, which replaces the incandescent light that came with the Molex machine and provides enough illumination to produce good images

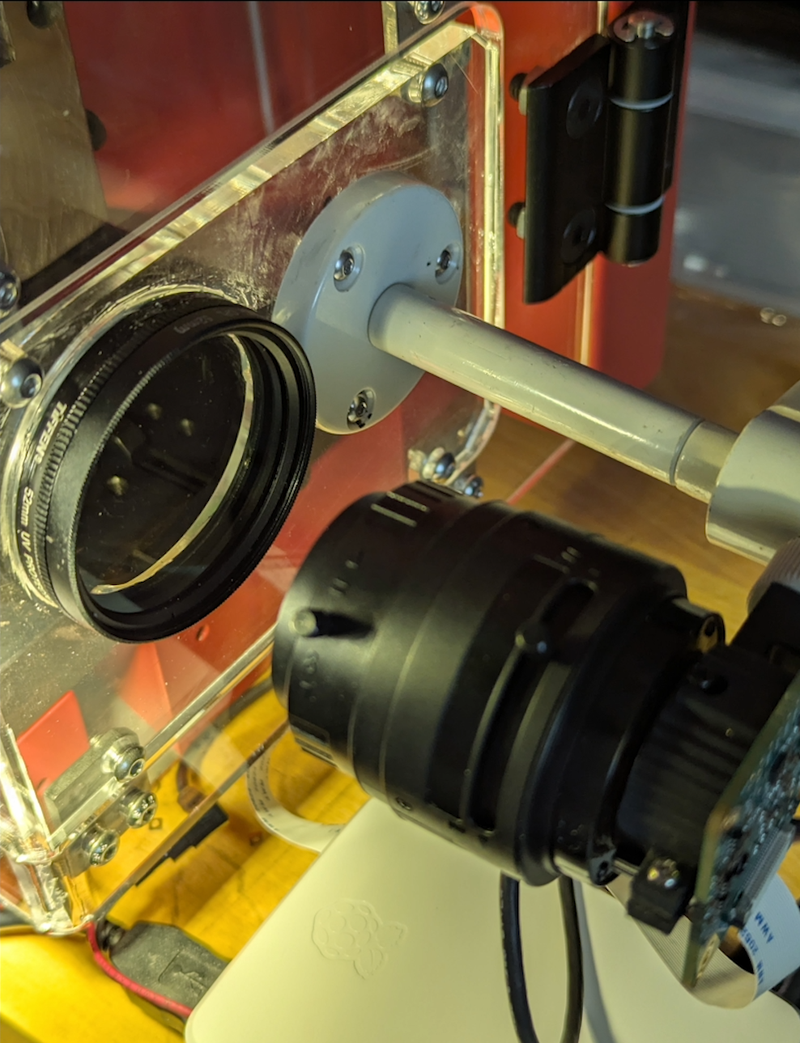

Because the imaging system is placed outside the safety plexiglass surrounding the Molex machine, Hexicurity cut a hole in the plexiglass, providing the system with physical and visual access to the crimp point. The company then placed the circular polarizer inside the hole and positioned the Theia lens and Raspberry Pi camera, which is attached to the STM32N6 microcontroller, on the other side of the hole. The lens is set at a 6 mm focal length.

Mallard says the circular polarizer helps cut glare from shiny, reflective surfaces such as metal objects.

He chose the Raspberry Pi camera because it allows him to set and then lock the distance between the lens and the camera’s sensor. “We were able to back the lens out far enough to turn that Theia lens into a macro lens, where we could go six inches out and get a sharp image,” Mallard explains.
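A rough thin-lens calculation shows why backing the lens away from the sensor brings a close object into focus. The Python below is a sketch under stated assumptions: it treats the varifocal lens as an ideal thin lens at the 6 mm focal length mentioned in the article, takes the six-inch working distance as 152.4 mm, and uses the hypothetical function names `image_distance_mm` and `extension_mm`. A real compound lens will differ in detail.

```python
def image_distance_mm(focal_mm: float, object_mm: float) -> float:
    """Solve the thin-lens equation 1/f = 1/d_o + 1/d_i for d_i."""
    if focal_mm <= 0 or object_mm <= focal_mm:
        raise ValueError("object must be farther away than one focal length")
    return focal_mm * object_mm / (object_mm - focal_mm)


def extension_mm(focal_mm: float, object_mm: float) -> float:
    """Extra lens-to-sensor spacing compared with infinity focus (d_i = f)."""
    return image_distance_mm(focal_mm, object_mm) - focal_mm


if __name__ == "__main__":
    focal = 6.0          # mm, lens setting reported in the article
    working = 152.4      # mm, six inches (idealized working distance)
    print(f"image distance: {image_distance_mm(focal, working):.3f} mm")
    print(f"extension:      {extension_mm(focal, working):.3f} mm")
```

Under these idealized assumptions the sensor sits about 6.25 mm behind the lens, roughly 0.25 mm farther back than it would for a distant subject, which is the kind of adjustment a lockable lens-to-sensor distance makes possible.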

The lens from Theia is helpful not only for the interim imaging-only phase of the project but also for the upcoming machine learning-enabled vision phase. Mallard says the “flat” FOV of the lens helps reduce computational effort because the “machine learning algorithm can skip image de-warping, and dropping this step accelerates inference.”

Building A Machine Learning Algorithm

As of December 2025, Mallard and team were refining the machine learning-based workflow, running on rented Nvidia (Santa Clara, CA, USA) GPU clusters in the cloud.

The team produced a model of the “perfect” crimp based on “a bunch of ‘good’ images captured in-situ by the camera and optics that are going to be used in production,” Mallard says.
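The article does not say which modeling technique the team uses. As one simple illustration of building a reference from good images only, the Python sketch below computes a per-pixel mean and spread from known-good images and scores a new image by how far it deviates. Everything here is an assumption for illustration: the function names `fit_reference` and `deviation_score`, the flattened grayscale lists standing in for images, and the spread floor of 1.0.

```python
from statistics import mean, pstdev


def fit_reference(good_images):
    """Build a per-pixel (means, spreads) reference from good images.

    Each image is a flat list of grayscale values of identical length
    (an assumed, simplified stand-in for real camera frames).
    """
    if not good_images or len({len(img) for img in good_images}) != 1:
        raise ValueError("need at least one image, all the same size")
    columns = list(zip(*good_images))  # one tuple of samples per pixel
    means = [mean(col) for col in columns]
    # Floor the spread so pixels that never vary do not divide by zero.
    spreads = [max(pstdev(col), 1.0) for col in columns]
    return means, spreads


def deviation_score(image, means, spreads):
    """Mean absolute deviation from the reference, in units of spread."""
    if len(image) != len(means):
        raise ValueError("image size does not match the reference")
    return mean(abs(p - m) / s for p, m, s in zip(image, means, spreads))


if __name__ == "__main__":
    good = [[10.0, 200.0], [12.0, 198.0], [11.0, 202.0]]  # toy data
    means, spreads = fit_reference(good)
    print(deviation_score([11.0, 200.0], means, spreads))  # matches reference
    print(deviation_score([60.0, 120.0], means, spreads))  # far from reference
```

A low score means the new image looks like the good examples; a high score flags it for rejection or review. A production model would likely be a trained neural network rather than per-pixel statistics, but the "learn from good examples only" idea is the same.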

For the inferencing, or the production stage, Mallard wants to move the entire ML-enabled vision system to the edge.

Mallard says that the combination of the processor from STMicroelectronics and the lens from Theia should allow the team to move inference image processing from the cloud to the STM32N6. “All of this depends upon how fast the STM32N6 can produce confidence scores” on the output from the algorithm, he says. “If we can move to the edge, this becomes a giant win for security, network traffic and most importantly cloud computing costs,” he adds.
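Whether edge inference is fast enough is ultimately a measurement question. As a hedged illustration, the Python below checks a list of measured inference times against a cycle-time budget using a nearest-rank percentile. The function names, the 95th-percentile choice, and the sample numbers are assumptions for illustration; the article does not state Hexicurity's latency target.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def fits_budget(latencies_ms, budget_ms, pct=95):
    """True if the chosen percentile of measured latency is within budget."""
    return percentile(latencies_ms, pct) <= budget_ms


if __name__ == "__main__":
    measured_ms = [12, 15, 11, 40, 13]  # made-up timings, not real STM32N6 data
    print(percentile(measured_ms, 95))
    print(fits_budget(measured_ms, budget_ms=50))
    print(fits_budget(measured_ms, budget_ms=30))
```

Judging by a high percentile rather than the average keeps occasional slow inferences from hiding behind many fast ones, which matters when an operator is waiting on each go/no-go decision.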

Figure 2: The imaging system includes a circular polarizer to cut glare and a lens set at a 6 mm focal length.
