Key Considerations in Lens Selection

Optics are critical to the success of imaging systems across industries and applications, including video surveillance, intelligent transportation systems, and machine vision, and sound lens selection underpins that success. The principles of lens selection are discussed below as they relate to machine vision, but they apply to other industries as well.

In machine vision, robotics, and factory automation, imaging systems are central to precision, repeatability, and real-time decision-making. These systems are deployed in semiconductor inspection, electronics assembly, automotive manufacturing, food processing, and many other fields, where performance depends on accurately capturing and interpreting visual data.

Overall system accuracy depends on the entire imaging chain: lighting, cameras and sensors, optics, data transfer, and software processing. Among these, the lens plays a uniquely critical role: it determines how sharply the image is captured by the sensor, ultimately setting the upper limit on image resolution and measurement accuracy.

A poorly selected or mismatched lens can undermine even the most advanced system, introducing distortion, reducing contrast, and limiting resolution. Conversely, a high-quality lens captures detail with consistent fidelity, ensuring downstream processing has accurate data.

Key lens selection considerations include sensor compatibility, resolution performance, system geometry, optical distortion and aberrations, light sensitivity, spectral performance, focus control, calibration, and environmental robustness. Explore answers to common lens selection questions below.

Learn more about Theia’s related lens solutions at these links:

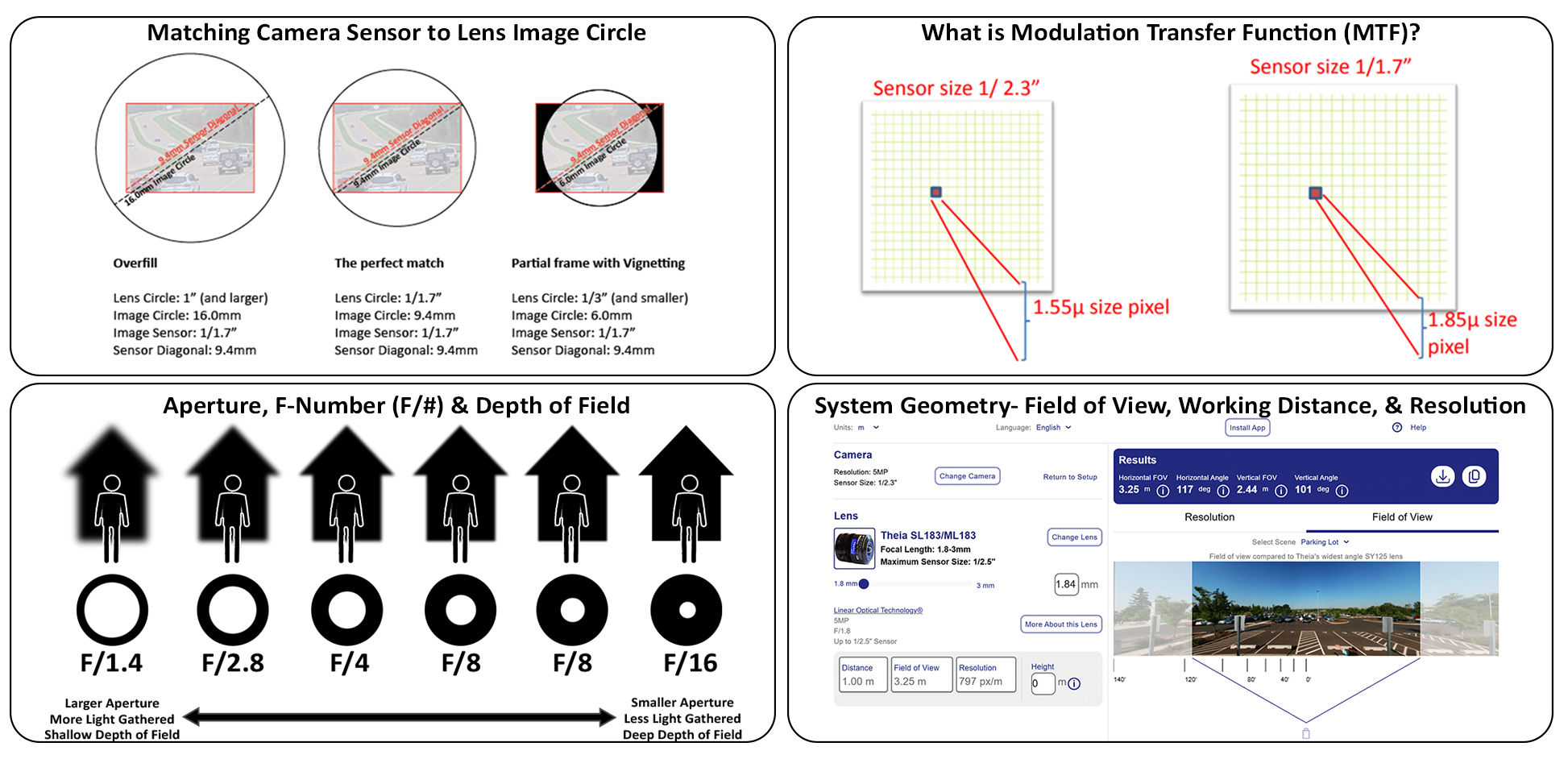

1. How do I match a lens to my camera sensor size?

Matching the lens's image circle diameter to the camera sensor diagonal, with both expressed in the same units (typically mm), is fundamental to capturing the full image.

2. How do I determine if my lens is compatible with my camera’s sensor?

Two things must match for a lens to be fully compatible with your camera's sensor: the lens mount type must match the camera mount type, and the lens's image circle (mm) must cover the camera sensor diagonal.

If you know your camera's sensor size, you can test your lens and camera sensor combination with Theia's Lens Calculator and Resolution Simulator, where you can check whether the lens image circle covers your camera sensor, see the resulting field of view and resolution, and download the results.

3. What happens if a lens image circle doesn't match my camera sensor?

If the image circle is much larger than the sensor diagonal, the result is overfill. Overfill does not harm the image, but in applications where camera and lens size matter, it may mean the lens is larger than necessary. However, if the image circle is smaller than the sensor diagonal, you will get vignetting: portions of the sensor will not receive light, resulting in dark corners.

The only way to avoid vignetting is to ensure that the image circle is equal to or larger than the camera sensor's diagonal.
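The coverage check described above can be sketched in a few lines of Python. This is a minimal illustration, not a Theia tool: the function names and the example sensor dimensions (7.2 mm x 5.4 mm) are assumptions chosen for the example.

```python
import math


def sensor_diagonal_mm(width_mm: float, height_mm: float) -> float:
    """Diagonal of the sensor's active area, in mm."""
    return math.hypot(width_mm, height_mm)


def image_circle_margin_mm(image_circle_mm: float,
                           width_mm: float,
                           height_mm: float) -> float:
    """Image circle diameter minus sensor diagonal.

    Negative: the circle is smaller than the diagonal (expect vignetting).
    Zero or positive: the sensor is covered; a large positive value is overfill.
    """
    return image_circle_mm - sensor_diagonal_mm(width_mm, height_mm)


# Hypothetical sensor with a 7.2 mm x 5.4 mm active area (about a 9 mm diagonal).
for circle_mm in (8.0, 11.0):
    margin = image_circle_margin_mm(circle_mm, 7.2, 5.4)
    status = "vignetting" if margin < 0 else "covered"
    print(f"image circle {circle_mm} mm: margin {margin:+.2f} mm ({status})")
```

In practice the sensor width and height would come from the camera datasheet and the image circle from the lens datasheet.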

Proper lens selection is the foundation of high-resolution performance. Even the most advanced sensor cannot deliver meaningful detail if the lens fails to project sharp, high-contrast images.

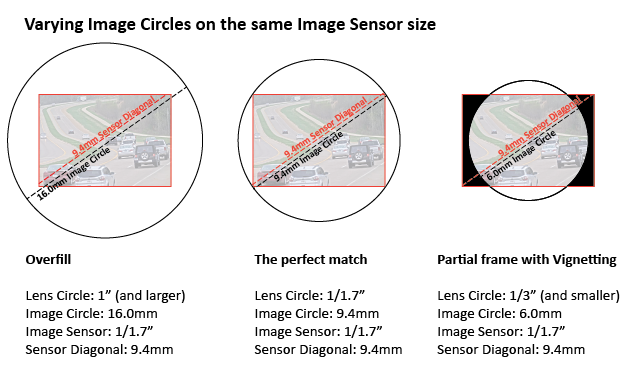

1. How does sensor pixel size affect lens selection?

A sensor’s pixel structure, defined by the size and arrangement or density of individual pixels on the sensor, determines how much detail can be captured. Pixel pitch, or the distance between pixel centers, determines the smallest detail the sensor can detect. Smaller pixels allow finer detail capture but collect less light, reducing sensitivity. Larger pixels improve light collection at the cost of fine resolution.

2. How do I calculate pixel density for industrial lenses?

Pixel density for industrial imaging is usually expressed in pixels per mm rather than pixels per inch, because of the compact sizes of the lenses and cameras used. It is calculated by dividing the number of pixels along one axis of the sensor (horizontal or vertical) by the field of view (FOV) along the same axis. Example: a camera with 12,000 pixels across a 200 mm field of view yields 12000/200 = 60 px/mm pixel density.
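As a rough sketch, the calculation can be written as a small Python helper. It assumes the pixel count and the field of view are measured along the same axis; the function names and the 0.1 mm feature size are illustrative assumptions.

```python
def pixel_density_px_per_mm(pixels_along_axis: int, fov_mm: float) -> float:
    """Pixels per mm on the object, along one axis of the image."""
    if fov_mm <= 0:
        raise ValueError("field of view must be positive")
    return pixels_along_axis / fov_mm


def pixels_on_feature(density_px_per_mm: float, feature_mm: float) -> float:
    """Approximate number of pixels spanning a feature of the given size."""
    return density_px_per_mm * feature_mm


# The example from the text: 12,000 pixels across a 200 mm field of view.
density = pixel_density_px_per_mm(12000, 200.0)
print(density)  # 60.0

# Hypothetical 0.1 mm defect: how many pixels would it span?
print(round(pixels_on_feature(density, 0.1), 2))
```

Whether a given number of pixels on a feature is enough depends on the detection or measurement algorithm, so any threshold should be treated as application-specific.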

Learn more here: Whitepaper: Keys to Delivering on the Promise of 4K Video

3. What is Modulation Transfer Function and why is it important?

MTF, or Modulation Transfer Function, is an indicator of lens resolution. It is typically plotted as a curve of contrast versus spatial frequency. As line spacing decreases (i.e., spatial frequency increases) on a test object, it becomes increasingly difficult for the lens to maintain contrast between the lines in the image, and MTF contrast correspondingly decreases.

High MTF contrast at high spatial frequencies indicates that a lens can maintain strong contrast in fine details, critical for defect detection, measurement, and classification.

Lens MTF describes the optics' ability to preserve contrast as the scene is imaged onto the sensor, while sensor MTF reflects how accurately the pixel array samples that image. The highest spatial frequency the sensor can sample, its Nyquist limit, is 1/(2 × pixel pitch).

4. How do pixel size and lens MTF affect image resolution?

Even a high-resolution sensor cannot recover contrast lost due to a poor lens, and a high-MTF lens paired with a low-resolution sensor is similarly limited. System resolution depends on both lens and sensor performance; the lens must have sufficient resolving power to take full advantage of the sensor's pixel density.

In one example of system resolution, a pixel pitch of 1.55 μm gives a sensor limit of 1/(2 × 0.00155 mm) ≈ 323 line pairs per mm (lp/mm), which is greater than 300 lp/mm. To achieve the optimal system resolution, the lens should maintain usable contrast up to roughly the same spatial frequency as the sensor limit.
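The relationship between pixel pitch and the sensor's limiting spatial frequency, 1/(2 × pixel pitch), can be checked with a short Python sketch. The function name is an assumption made for illustration.

```python
def sensor_limit_lp_per_mm(pixel_pitch_um: float) -> float:
    """Sensor limiting frequency in line pairs per mm: 1 / (2 * pixel pitch).

    One line pair (one dark line plus one bright line) needs at least
    two pixels, which is where the factor of 2 comes from.
    """
    if pixel_pitch_um <= 0:
        raise ValueError("pixel pitch must be positive")
    pitch_mm = pixel_pitch_um / 1000.0  # micrometres to millimetres
    return 1.0 / (2.0 * pitch_mm)


# The 1.55 um pixel pitch from the text, plus two other common pitches.
for pitch_um in (1.55, 2.4, 3.45):
    print(f"{pitch_um} um pitch: {sensor_limit_lp_per_mm(pitch_um):.1f} lp/mm")
```

Comparing this figure with the lens MTF curve at the same spatial frequency gives a first indication of whether the lens or the sensor limits the system.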

Applications such as semiconductor and PCB inspection and OCR/barcode recognition are highly sensitive to these factors: fuzzy features or lost edge sharpness can result in missed defects or recognition errors.

Learn more about Theia’s high resolution lens solutions at these links:

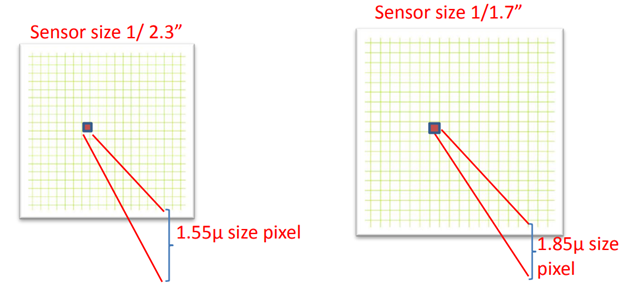

1. How do field of view and working distance affect resolution?

Field of view (FOV) and working distance are critical in lens selection because they determine how much of the scene is captured and at what resolution.

Focal length and working distance set the FOV, which directly impacts pixel density on the object. As working distance increases, objects occupy fewer pixels on the sensor, reducing effective resolution.

For a fixed working distance, changes in focal length determine how the native pixels from the sensor are spread across the image, setting the pixel density of the image and the ability to distinguish details.
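To make the geometry concrete, the sketch below estimates field of view and pixel density from focal length and working distance. It assumes a simple pinhole (thin-lens) approximation, FOV ≈ sensor width × working distance ÷ focal length, which is reasonable only for low-distortion lenses when the working distance is much larger than the focal length. The sensor width, pixel count, and distances are hypothetical values, and this is not the method used by any particular calculator.

```python
def fov_mm(sensor_width_mm: float, focal_length_mm: float,
           working_distance_mm: float) -> float:
    """Approximate field of view along one axis (pinhole approximation)."""
    return sensor_width_mm * working_distance_mm / focal_length_mm


def density_px_per_mm(pixels_along_axis: int, sensor_width_mm: float,
                      focal_length_mm: float,
                      working_distance_mm: float) -> float:
    """Approximate pixels per mm on the object along the same axis."""
    return pixels_along_axis / fov_mm(sensor_width_mm, focal_length_mm,
                                      working_distance_mm)


# Hypothetical setup: 7.2 mm wide sensor, 3840 pixels across, 12 mm lens.
for distance_mm in (500.0, 1000.0):
    width = fov_mm(7.2, 12.0, distance_mm)
    density = density_px_per_mm(3840, 7.2, 12.0, distance_mm)
    print(f"WD {distance_mm:.0f} mm: FOV {width:.0f} mm, {density:.1f} px/mm")
```

Under this approximation, doubling the working distance doubles the field of view and halves the pixel density, while doubling the focal length at a fixed working distance does the opposite.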

In conveyor inspection, for example, the lens must capture the full product width while resolving small defects. Too wide a field of view reduces detail; too narrow risks missing critical regions. In robotic applications such as bin-picking or autonomous navigation, working distance may vary, requiring consistent focus and resolution to ensure reliable detection.

Learn more here: Understanding the Trade Off Between Image Resolution and Field of View

2. How do I determine the right lens for my working distance and FOV, and how will I know whether my selected lens and camera will provide enough image resolution for my physical setup?

Tools like Theia Technologies' award-winning Lens Calculator and Resolution Simulator help system designers evaluate trade-offs by estimating pixel density and field of view based on camera sensor size and resolution, lens parameters such as focal length, and system geometry including distance, height, and angle.

This enables systems designers and engineers to quickly assess whether a given configuration meets the requirements of the application.

For more information, visit: High Resolution Lenses

Whitepaper: 4K Delivers More Pixels on Target

Whitepaper: Keys to Delivering on the Promise of 4K Video

Whitepaper: 4K Motorized Telephoto for High-Speed Tolling and Traffic Enforcement

Whitepaper: What's a Megapixel Lens and Why You Need One

Case Study: Deep Learning OCR Powered by Theia ML610M Lens

Case Study: Theia Lens Enables Automation via Machine Learning

1. What is lens distortion and how does it affect measurement accuracy?

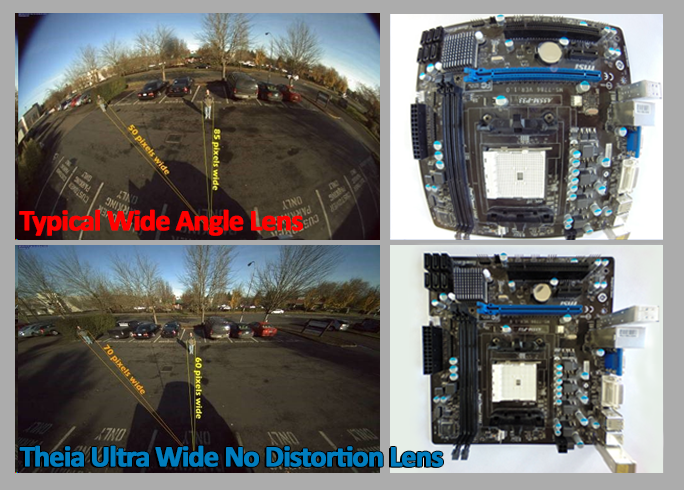

Optical distortion and aberrations directly affect image quality and measurement accuracy. Wide-angle lenses often introduce barrel distortion, curving straight lines and reducing usable resolution at the edges. This distortion produces a fisheye effect and can impair object detection and situational awareness in robotics and autonomous mobile vehicles.

Learn more here: Eliminate Distortion in Wide Angle Imaging for Machine Vision Systems | 日語 | 한국어

2. Fisheye vs. rectilinear lenses: which is better for machine vision?

Rectilinear lenses correct distortion optically, preserving straight lines and maximizing edge resolution without software correction or added latency. They are preferred in real-time systems, such as bin picking or autonomous mobile robots, where immediate decision-making is critical.
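The difference between the two lens types can be illustrated with textbook projection models. The sketch below assumes an ideal rectilinear mapping, r = f·tan(θ), and an ideal equidistant fisheye mapping, r = f·θ; real lenses deviate from both, and neither model is taken from a Theia datasheet. Distortion is expressed as the percentage deviation of the actual image height from the rectilinear ideal, with negative values indicating barrel distortion.

```python
import math


def rectilinear_radius_mm(focal_length_mm: float, field_angle_deg: float) -> float:
    """Image height for an ideal rectilinear lens: r = f * tan(theta)."""
    return focal_length_mm * math.tan(math.radians(field_angle_deg))


def equidistant_radius_mm(focal_length_mm: float, field_angle_deg: float) -> float:
    """Image height for an ideal equidistant fisheye lens: r = f * theta."""
    return focal_length_mm * math.radians(field_angle_deg)


def distortion_percent(actual_mm: float, ideal_mm: float) -> float:
    """Deviation from the rectilinear ideal; negative means barrel distortion."""
    return 100.0 * (actual_mm - ideal_mm) / ideal_mm


# Hypothetical 2 mm focal length, evaluated at several field angles.
for angle_deg in (15, 30, 45, 60):
    ideal = rectilinear_radius_mm(2.0, angle_deg)
    fisheye = equidistant_radius_mm(2.0, angle_deg)
    print(f"{angle_deg} deg: {distortion_percent(fisheye, ideal):.1f}% distortion")
```

The growing negative values toward the edge of the field show how a fisheye mapping compresses the image periphery, which is why fewer pixels cover objects near the edges.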

Lenses like Theia’s ultra-wide, no-distortion lenses, featuring patented Linear Optical Technology®, are widely used in machine vision for their high resolution, wide fields of view, low distortion, and real-time imaging capabilities essential to demanding machine vision applications.

Learn more here: How to Calculate Image Resolution in Rectilinear Lenses | 日語 | 한국어

3. What are telecentric lenses used for?

In precision metrology applications, even small amounts of distortion can lead to measurement errors. Telecentric lenses are commonly used in these cases because they eliminate perspective distortion and maintain constant magnification regardless of object distance.

4. What are aberrations in imaging and how are they prevented?

Other optical imperfections, such as chromatic aberration (which causes color fringing), stray light, and ghosting, reduce contrast and can interfere with feature detection. Advanced optical designs mitigate these effects using aspherical elements, low-dispersion glass, and high-performance coatings, which are critical in industrial applications even if not fully specified in datasheets.

For more information, visit: Ultra Wide No Distortion Lenses

Video: Ultra Wide No Distortion Lens Overview

Case Study: Ultra Wide, No Distortion Lenses Tough Enough for Crash Testing | 日語 | 한국어

Case Study: Imaging 100-Year-Old Shipwrecks Under 800 Feet of Water

Whitepaper: Improving Resolution with Wide-Angle Lenses

1. What is F/# and how is it calculated?

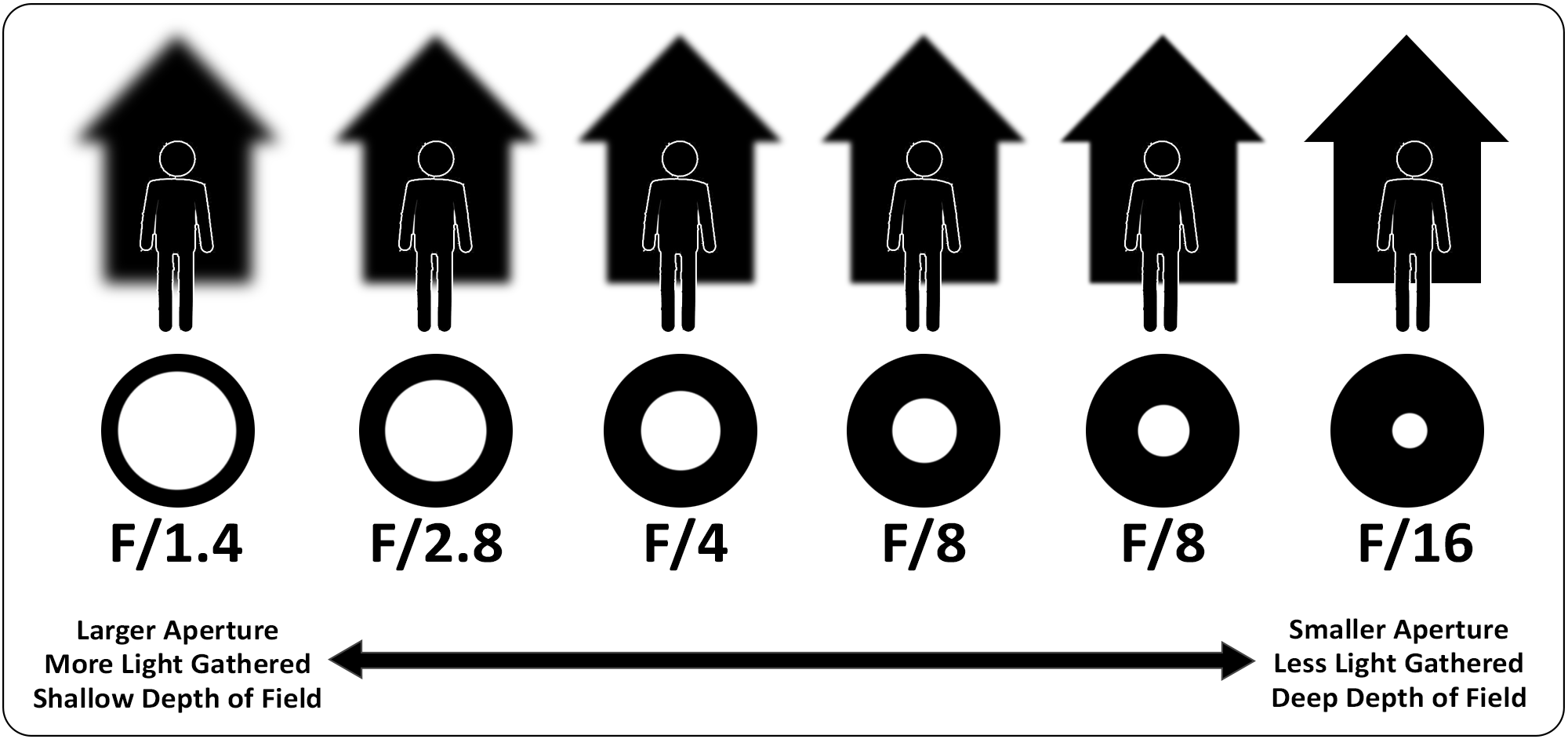

When selecting a lens, an important consideration is the F-number (F/#), which is defined as the focal length divided by the aperture diameter (F/# = focal length ÷ aperture diameter).

For example, at a 12 mm focal length, a 9 mm aperture results in approximately F/1.3, while a smaller 3 mm aperture results in F/4, significantly reducing the amount of light reaching the sensor. In general, the lower the F-number, the more light is transmitted through the lens.
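The arithmetic above can be reproduced with a short Python sketch. The relative-light helper relies on the standard relationship that light reaching the sensor scales with 1/(F/#)², ignoring transmission losses in the glass; the function names are illustrative assumptions.

```python
def f_number(focal_length_mm: float, aperture_diameter_mm: float) -> float:
    """F/# = focal length / aperture diameter."""
    if aperture_diameter_mm <= 0:
        raise ValueError("aperture diameter must be positive")
    return focal_length_mm / aperture_diameter_mm


def relative_light(reference_f_number: float, new_f_number: float) -> float:
    """Light at new_f_number as a fraction of light at reference_f_number."""
    return (reference_f_number / new_f_number) ** 2


wide = f_number(12.0, 9.0)    # about F/1.3
narrow = f_number(12.0, 3.0)  # F/4
print(f"F/{wide:.2f} and F/{narrow:.1f}")
print(f"light at F/4 relative to F/1.3: {relative_light(wide, narrow):.3f}")
```

In this example, stopping down from the 9 mm aperture to the 3 mm aperture leaves roughly one ninth of the light, since the aperture area shrinks with the square of its diameter.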

2. What F/# do I need for my lens and application?

The F-number of a lens determines how much light reaches the sensor and how the system balances sensitivity with optical performance. A lower F-number increases light collection, enabling faster shutter speeds required for high-speed inspection systems.

However, this comes at the cost of reduced depth of field and the potential introduction of optical aberrations. Conversely, higher F-numbers improve depth of field but reduce light throughput, requiring stronger illumination or longer exposure times.
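The depth-of-field side of this trade-off can be estimated with a common approximation, DOF ≈ 2·N·c·u²/f², where N is the F-number, c the acceptable circle of confusion, u the object distance, and f the focal length. The sketch below is only a rough guide: the approximation holds when the object distance is much larger than the focal length and well short of the hyperfocal distance, and the 0.005 mm circle of confusion is an assumed value that would normally be chosen from the pixel size and application tolerance.

```python
def depth_of_field_mm(f_number: float, focal_length_mm: float,
                      object_distance_mm: float,
                      circle_of_confusion_mm: float = 0.005) -> float:
    """Approximate total depth of field: 2 * N * c * u^2 / f^2."""
    return (2.0 * f_number * circle_of_confusion_mm
            * object_distance_mm ** 2 / focal_length_mm ** 2)


# Hypothetical setup: 12 mm lens focused at 500 mm.
for n in (1.33, 2.0, 4.0):
    dof = depth_of_field_mm(n, 12.0, 500.0)
    print(f"F/{n}: roughly {dof:.0f} mm depth of field")
```

Within this approximation depth of field grows linearly with F-number, while light throughput falls with its square, which is why stopping down usually demands stronger illumination or longer exposure.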

3. Why is selecting the proper F/# important?

Selecting a lens with an appropriate F/# ensures the system can achieve the necessary balance between light sensitivity, sharpness, and depth of field under real operating conditions. This tradeoff is fundamental to maintaining consistent image quality and reliable inspection performance.

1. What lighting considerations go into lens selection?

Lens selection must account for the spectral range of the imaging system, as optical performance is highly dependent on wavelength. Choosing a lens designed for the intended illumination spectrum is essential to maintaining focus accuracy and image clarity.

2. What is an IR-corrected lens and do I need one?

Standard lenses optimized for visible light are simpler and less expensive than IR-corrected lenses but may not perform correctly outside the visible range. In systems using near-infrared (NIR) illumination, a lens that is not designed for the NIR range can introduce focus shift when switching between visible and IR light, resulting in blurred images.

IR-corrected lenses are specifically designed to bring multiple wavelengths to the same focal plane, preserving sharp focus across varying lighting conditions without refocusing.

Learn more here: Whitepaper: Day / Night Demystified

3. How do lenses perform in multispectral or hyperspectral imaging?

In multispectral and hyperspectral imaging applications, such as food inspection or material sorting, lens selection becomes even more critical. These systems rely on multiple wavelengths to identify material properties and detect contaminants. The lens must maintain consistent focus and minimal aberration across a wide spectral range to ensure accurate classification and detection.

Designing and producing hyperspectral lenses that perform well simultaneously from the visible into the short-wave infrared (SWIR) range requires specialized materials and coatings, which greatly increase lens cost. For applications that can rely on imaging in a narrower wavelength range, a wider choice of less expensive lenses is available.

1. Why should I use a motorized or calibrated lens?

When selecting a lens for dynamic or automated environments, motorization and calibration capabilities can directly influence system flexibility and performance. Modern machine vision systems often require the ability to maintain image quality across variable working distances and changing fields of view without manual intervention.

Motorized lenses address this need in two key ways. First, they enable dynamic adjustment of field of view and focus, allowing a single system to zoom in for fine detail or zoom out for broader context while maintaining focus. Second, they provide remote operation of focus, zoom, and iris, eliminating the need for physical access to the camera and enabling real-time adjustments in hard-to-reach or automated environments.

Learn more here: Whitepaper: Intelligent Lens System Improves Integration in Machine Vision Systems | 日語 | 한국어

2. What are the benefits of calibrated lenses in automated systems?

Calibration data enables faster, more predictable lens behavior. Predefined relationships, such as focus-to-zoom tracking curves, allow the system to move directly to the correct focus position as zoom changes occur. In high-speed applications, this results in faster time to best focus.

Use of lens calibration curves does require an initial sensor-to-lens calibration procedure (back focal length calibration) on each camera-lens system to correct for small tolerances in sensor-to-lens position. Once the system calibration has been done, the calibrated focus-zoom curve can be used to quickly find best focus at any focal length and any object distance. This is helpful in systems deployed into dynamic environments with variable object distances.
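A focus-zoom tracking lookup of this kind might be sketched as follows. Everything here is hypothetical: the curve values, the motor step units, the linear interpolation, and the way the back focal offset is applied are assumptions for illustration and do not describe any specific lens or controller API. A real system would also typically hold a family of curves indexed by object distance.

```python
from bisect import bisect_right

# Hypothetical tracking curve for one object distance: (zoom_step, focus_step).
TRACKING_CURVE = [(0, 1200), (1000, 1450), (2000, 1800), (3000, 2300)]


def focus_for_zoom(zoom_step: int,
                   curve=TRACKING_CURVE,
                   back_focus_offset: int = 0) -> int:
    """Interpolate the focus motor position for a given zoom motor position.

    back_focus_offset stands in for the per-unit correction found during
    the initial sensor-to-lens calibration.
    """
    zooms = [z for z, _ in curve]
    if not zooms[0] <= zoom_step <= zooms[-1]:
        raise ValueError("zoom step outside calibrated range")
    # Index of the upper bracketing point, clamped to the last curve entry.
    i = min(bisect_right(zooms, zoom_step), len(curve) - 1)
    (z0, f0), (z1, f1) = curve[i - 1], curve[i]
    t = (zoom_step - z0) / (z1 - z0)
    return round(f0 + t * (f1 - f0)) + back_focus_offset


print(focus_for_zoom(1500))                        # 1625
print(focus_for_zoom(1500, back_focus_offset=12))  # 1637
```

Because the focus position is computed directly rather than searched for, the system can move to the target focus in one step as zoom changes.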

For more information, visit: Intelligent Lenses or view videos on Theia's intelligent motorized lenses here:

1. What environmental conditions are considered during lens selection?

Lens selection plays a critical role in ensuring reliable performance in demanding industrial environments, particularly where temperature fluctuations, vibration and shock, and contaminants can significantly affect optical performance.

Learn more here:

Case Study: Ultra Wide, No Distortion Lenses Tough Enough for Crash Testing | 日語 | 한국어

Theia Vibration Shock Test Report

2. How do temperature and vibration affect lens performance?

Athermalized lens designs mitigate focus drift caused by temperature-induced changes in lens materials and housing. Ruggedized lens construction and secure mounting features prevent positioning changes and defocusing from vibration and shock generated by machinery or mobile platforms. In environments with dust, moisture, or exposure to cleaning processes, suitable lens construction and/or protective housings with appropriate IP ratings are essential for preventing contamination and ensuring long-term image quality and system reliability.

These considerations are especially important in robotics applications where robotic arms are constantly in motion, or where mobile robots navigate outdoor terrain, for example in agricultural or excavation applications.

Learn more here:

Video: Air Combat USA [Theia's SY125 used in Aerospace]

Video: Tahoe demo HD [Theia's SL183 used in off-road application]

What factors matter most when selecting a machine vision lens?

There are many considerations for selecting the right sensor for the application. Selecting the right lens should receive equal consideration so that the high performance of the sensor is not limited by insufficient optical performance.

Lens selection involves balancing performance requirements with cost constraints. High performing lenses often require more complex designs, including additional aspherical optical elements, specialized materials, advanced coatings, motorization, and intelligence features.

Whitepaper: Key Considerations for Lens Selection in Video Surveillance Systems | 日語 | 한국어

However, in machine vision and robotics applications, lens quality can make or break a system's accuracy and reliability: investing in a higher-quality lens can reduce errors and increase production rate. Conversely, selecting a lower-cost lens that limits performance may lead to higher overall system costs due to increased defects, maintenance downtime, or system rework.

By carefully considering sensor compatibility, optical performance, field of view, lighting, and environmental conditions, system designers can ensure that the lens supports overall system requirements for performance.

As automated systems continue to demand higher precision and greater flexibility, advances in lens technology, including intelligent motorized optics like Theia’s IQ Lenses™, are enabling new levels of advanced capability. Ultimately, the success of any imaging system comes down to the quality of the data it captures, making thoughtful lens selection essential.
